In April 2026, the AI image world was jolted by a sudden “appear fast, disappear faster” event on LMArena. While users were still criticizing GPT-Image-1 for yellow tone bias, hand artifacts, and unstable text-heavy scenes, three anonymous models appeared and were quickly identified by the community as likely OpenAI’s unreleased GPT Image 2.

The spread pattern was classic: arena testers spotted unusual outputs first, then major voices on X amplified the story, and finally screenshots exploded across Reddit, YouTube, Instagram, and TikTok. Once people saw unusually stable text rendering and UI reconstruction in some samples, the conversation moved from “is this a new model?” to “is this the next major image production turning point?”

This article is based on public leak samples and X discussions, and focuses on three practical questions: what this leak signals about OpenAI’s image roadmap, which capability gains look meaningful versus still unverified, and how to split tasks across GPT Image 2, NanoBanana, and Grok Image in a 2026 workflow.

Let’s break it down. Start with the timeline.

GPT Image 2 history: from DALL-E to native GPT image generation

OpenAI’s image trajectory makes the current leak much easier to interpret:

- 2021-2024, DALL-E 1/2/3 era: creative range and controllability improved, but yellow tint, hand distortions, and unstable complex text remained persistent issues.
- March 2025, GPT-Image-1 integrated into ChatGPT: official messaging emphasized realism and world knowledge, yet many real use cases still broke in complex scenes.
- December 2025, GPT-Image-1.5 iterative update: generation became faster and in-context editing improved, but core pain points were not fully resolved.
- April 4, 2026, suspected GPT Image 2 enters LMArena stress testing: multiple anonymous variants appeared, spread rapidly, and were removed within hours.

The key shift is not just “more photoreal images.” It is deeper coupling between image generation and ChatGPT-level world knowledge. That is why expectations for GPT Image 2 are now about reliable semantic execution, not only visual style.

How the leak happened: full exposure timeline

Based on public traces and cross-platform reposts, the event timeline can be summarized as follows:

- Three anonymous models appeared on LMArena under the codenames maskingtape-alpha, gaffertape-alpha, and packingtape-alpha.
- Developer and investor accounts amplified the topic on X. Accounts including @levelsio and @blakeir openly questioned whether these were OpenAI test endpoints.
- Leak screenshots spread across X, Reddit, Instagram, YouTube, and TikTok. The core claim was consistent: text rendering looked unusually sharp, and multi-element scenes were much more stable than in the prior generation.
- All three models were removed within hours. The community interpreted the takedown as a pre-release gray test exposed too early.
- Later reports mentioned short-lived duct-tape-* aliases. These were seen as possible A/B or safety-tuning reruns, but no stable public access remained.

In short, this did not look like a normal public launch. It looked like a pre-release test that accidentally got watched by everyone.

Real-world leaked outputs: why people call this a generation jump

Across public social samples and X discussions, four capability areas were discussed most often:

1) Photorealism and detail consistency

- Skin texture, reflective surfaces, and highlight behavior looked more natural.

- Fingers, overlaps, and occlusion logic were more stable.

- Overall color grading looked less yellow and less artificial.

Many users summarized it this way: “For once, portraits don’t immediately look AI-generated.”

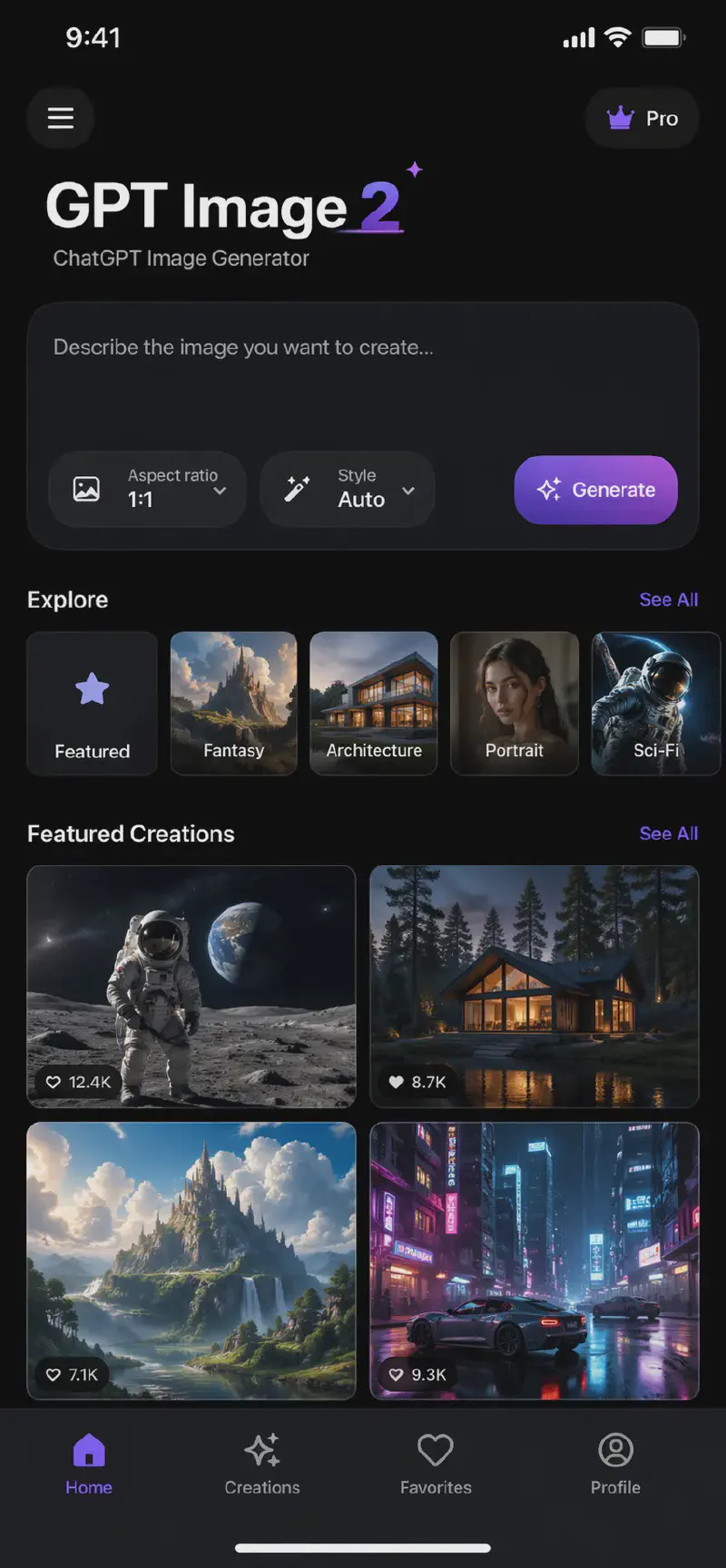

2) Text rendering quality improved sharply

- Handwriting, interface text, and comic dialog balloons were more readable.

- Text looked integrated into scenes rather than floating overlays.

- Mixed-language scenes appeared to have fewer obvious failures.

This was the biggest breakout point because legacy image models commonly fail here.

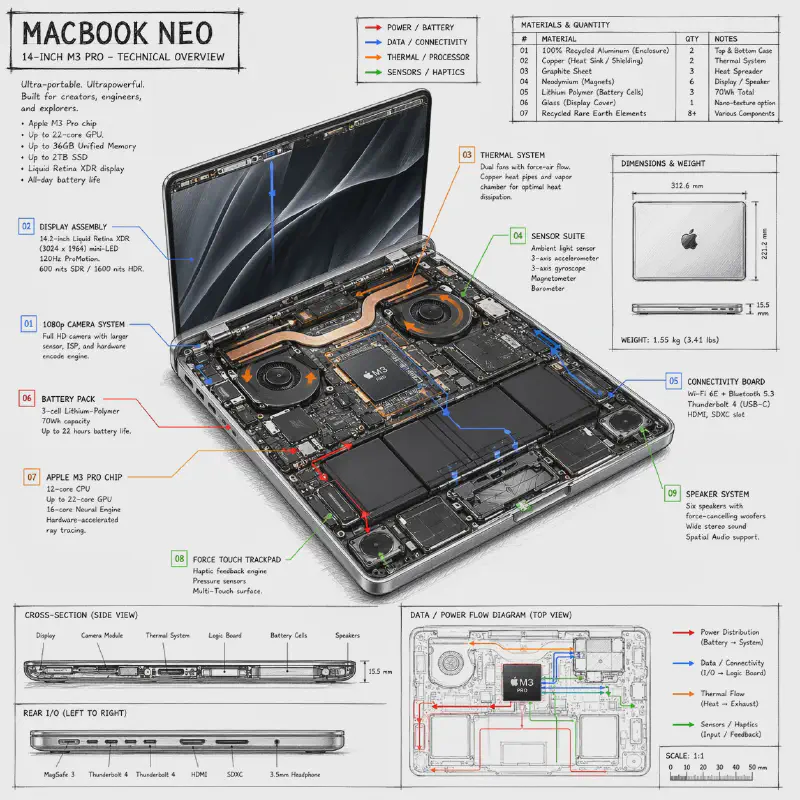

3) Stronger complex scene composition and UI reconstruction

- In web and desktop mock scenes, layout hierarchy looked closer to real products.

- Multi-subject and multi-layer compositions held together better.

- In creative tasks (comics, game UI, pseudo-photographic posters), detail retention looked stronger.

4) Deeper world knowledge usage

Community tests frequently reported more stable handling of brand context, scene logic, and style priors. For creators, this suggests that shorter prompts may still yield richer implicit context.

Why the takedown increased “launch soon” expectations

A routine research prototype would be unlikely to produce outputs that drew this much attention, or to be pulled within hours once it did. Combined with OpenAI’s previous gray-release practices, this pattern suggests final-stage stress testing before a formal rollout.

More importantly, the long-term advantage of the GPT image stack lies in its integration with ChatGPT, which combines conversational context, world knowledge, image editing, and text generation in one process. That level of integration could reshape the competitive landscape.

Final showdown: GPT Image 2 (leaked) vs NanoBanana vs Grok Image

Based on visible arena feedback, community samples, and platform positioning, this is a practical comparison (5-star scale):

| Dimension | GPT Image 2 (leaked) | NanoBanana (Pro / 2) | Grok Image (Grok Imagine) | Best fit |
|---|---|---|---|---|
| Realism / naturalness | ★★★★★ (photo-grade, stable hands and tones) | ★★★★★ (top-tier natural, cinematic feel) | ★★★★☆ (strong, sometimes dramatic) | GPT Image 2 / NanoBanana tie |
| Text rendering | ★★★★★ (best for dense text scenes) | ★★★★☆ (excellent, occasional blur in extreme cases) | ★★★☆☆ (creative > precise text) | GPT Image 2 leads |
| Prompt following / world knowledge | ★★★★★ (strong with long constraints) | ★★★★★ (excellent grounding behavior) | ★★★★☆ (highly creative) | GPT Image 2 / NanoBanana |
| Generation speed | ★★★★☆ (expected medium-fast) | ★★★☆☆ (slower in highest-quality mode) | ★★★★★ (clear speed edge) | Grok leads |
| Editing and resolution | ★★★★★ (high-res + strong edit potential) | ★★★★★ (top-tier high-fidelity editing) | ★★★☆☆ (basic editing, smaller output size) | NanoBanana leads (GPT Image 2 editing not yet verified) |
| Style diversity | ★★★★☆ (stable realism and commercial style) | ★★★★☆ (strong, but mostly natural look) | ★★★★★ (fast style switching) | Grok leads |
| Price / value | ★★★★☆ (likely reasonable within the ChatGPT ecosystem) | ★★★☆☆ (high quality can be costly) | ★★★★★ (low cost, high speed) | Grok leads |
| Permissiveness (more stars = fewer restrictions) | ★★★☆☆ (moderate policy constraints) | ★★☆☆☆ (stricter policy posture) | ★★★★★ (more open output range) | Grok leads |
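The table does not pick one winner, because the right model depends on which dimensions a task cares about. One way to make that explicit is to treat the stars as data and compute a weighted score per task. The sketch below is a hypothetical illustration, not a benchmark: the dimension keys, the two task profiles, and their weights are assumptions of this example; "openness" stands for the restrictions / safety posture row, where more stars means a more open output range; and the GPT Image 2 ratings rest on leaked samples only.

```python
from typing import Dict, List, Tuple

# Star ratings transcribed from the comparison table (1-5, higher is better).
# "openness" = restrictions / safety posture row (more stars = more open output).
RATINGS: Dict[str, Dict[str, int]] = {
    "GPT Image 2 (leaked)": {"realism": 5, "text": 5, "prompt": 5, "speed": 4,
                             "editing": 5, "style": 4, "value": 4, "openness": 3},
    "NanoBanana": {"realism": 5, "text": 4, "prompt": 5, "speed": 3,
                   "editing": 5, "style": 4, "value": 3, "openness": 2},
    "Grok Image": {"realism": 4, "text": 3, "prompt": 4, "speed": 5,
                   "editing": 3, "style": 5, "value": 5, "openness": 5},
}


def weighted_score(stars: Dict[str, int], weights: Dict[str, float]) -> float:
    """Weighted mean of the star ratings over the dimensions a task cares about."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(w * stars[dim] for dim, w in weights.items()) / total


def rank_models(weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (model, score) pairs sorted from best to worst fit."""
    scored = [(name, weighted_score(stars, weights)) for name, stars in RATINGS.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical task profiles: each weight says how much a dimension matters.
TEXT_HEAVY_POSTER = {"text": 3, "realism": 2, "prompt": 2}
BULK_IDEATION = {"speed": 3, "value": 2, "style": 2}

if __name__ == "__main__":
    for label, profile in [("text-heavy poster", TEXT_HEAVY_POSTER),
                           ("bulk ideation", BULK_IDEATION)]:
        best, score = rank_models(profile)[0]
        print(f"{label}: {best} ({score:.2f})")
```

With these weights, the text-heavy poster profile ranks GPT Image 2 first (5.00, ahead of NanoBanana at about 4.57), while bulk ideation ranks Grok Image first (5.00). Adjusting the weights to your own priorities is the point of the exercise.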

How to choose: the most practical 2026 strategy

If you are a creator, marketer, or independent designer, routing each task to the model that fits it is more practical than betting on a single model:

- Fast iteration and high-volume ideation: prioritize Grok Image.

- High-fidelity edits and delivery-grade outputs: prioritize NanoBanana.

- High realism + strong text accuracy + complex semantic scenes: plan around GPT Image 2 once it is officially released.
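The routing rules above can be written as a small dispatch function. This is a hypothetical sketch: `ImageTask`, its fields, and the priority order (text accuracy first, then delivery quality, then everything else treated as ideation) are assumptions of this example, and the returned model names are plain labels rather than API identifiers. Until GPT Image 2 is officially available, text-critical work falls back to NanoBanana, which has the next-highest text rating in the comparison table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageTask:
    """Hypothetical job description; field names are illustrative, not from any API."""
    text_critical: bool = False   # dense text, UI mockups, or complex semantic scenes
    delivery_grade: bool = False  # high-fidelity edit or client-ready final asset


def route(task: ImageTask, gpt_image_2_available: bool = False) -> str:
    """Pick a model label using the task-based routing rules described in the text."""
    if task.text_critical:
        # Assumption: until GPT Image 2 is officially released, fall back to
        # NanoBanana, which has the next-highest text score in the comparison table.
        return "GPT Image 2" if gpt_image_2_available else "NanoBanana"
    if task.delivery_grade:
        # High-fidelity edits and delivery-grade outputs.
        return "NanoBanana"
    # Everything else is treated as fast, high-volume ideation.
    return "Grok Image"


if __name__ == "__main__":
    print(route(ImageTask(text_critical=True)))                              # NanoBanana
    print(route(ImageTask(text_critical=True), gpt_image_2_available=True))  # GPT Image 2
    print(route(ImageTask(delivery_grade=True)))                             # NanoBanana
    print(route(ImageTask()))                                                # Grok Image
```

Keeping the fallback behind a single flag means the routing table changes in one place when the official release lands.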

One-line strategy: 2026 image workflows are no longer single-model pipelines but coordinated stacks across ChatGPT + Gemini + Grok. Good routing can improve quality, speed, and cost together.

Closing thoughts

The GPT Image 2 leak highlights a broader trend: AI image systems are shifting from visual novelty to production-grade reliability. As text rendering, world knowledge, and scene consistency reach higher standards, these models transition from creative tools to essential workflow infrastructure.

The key consideration is not which company delivers the most impressive demo, but which model provides stable results in real business contexts. Which model will you choose for your workflow?